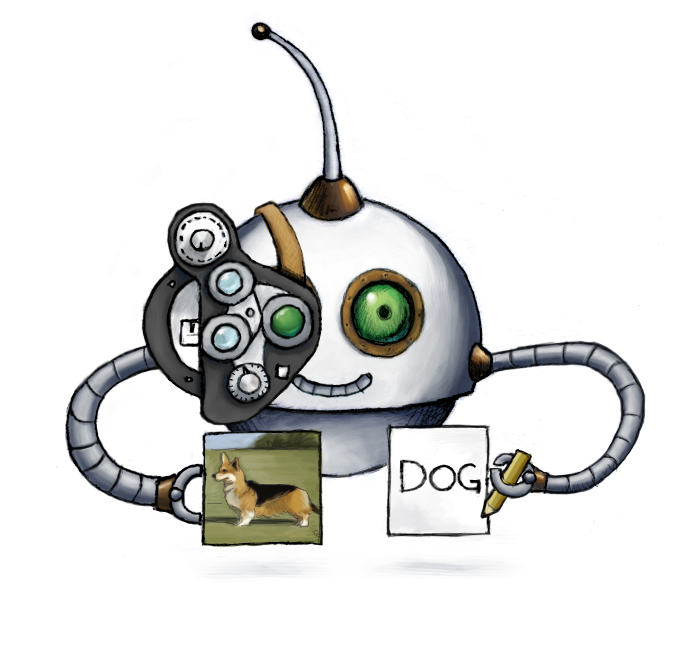

Recognize objects in images

🤖/image/describe recognizes objects in images and returns them as English words.

You can use the labels that we return in your application to automatically classify images. You can also pass the labels down to other Robots to filter images that contain (or do not contain) certain content.

Warning: Transloadit aims to be deterministic, but this Robot uses third-party AI services. The providers (AWS, GCP) will evolve their models over time, giving different responses for the same input images. Avoid relying on exact responses in your tests and application.

Usage example

Recognize objects in an uploaded image and store the labels in a JSON file:

    {
      "steps": {
        "described": {
          "robot": "/image/describe",
          "use": ":original",
          "provider": "aws"
        }
      }
    }
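As a variation on the example above, the following sketch asks for a flat list of labels rather than the full response with confidence percentages. It only uses the granularity and format parameters documented in the Parameters section; the Step name "described" is arbitrary and the choice of "gcp" is purely illustrative.

```json
{
  "steps": {
    "described": {
      "robot": "/image/describe",
      "use": ":original",
      "provider": "gcp",
      "granularity": "list",
      "format": "json"
    }
  }
}
```

Setting "format" to "json" is redundant here because it is the default; it is spelled out only to make the output type explicit.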

Parameters

- use (String / Array of Strings / Object, required): Specifies which Step(s) to use as input.

  - You can pick any names for Steps except ":original" (reserved for user uploads handled by Transloadit).

  - You can provide several Steps as input with arrays:

    "use": [ ":original", "encoded", "resized" ]

  💡 That’s likely all you need to know about use, but you can view Advanced use cases.

- provider (String, required): Which AI provider to leverage. Valid values are "aws" and "gcp".

  Transloadit outsources this task and abstracts the interface, so you can expect the same data structures but different latencies and different information being returned. Different cloud vendors excel in different areas, and we recommend trying both to see which yields the best results for your use case.

- granularity (String ⋅ default: "full"): Whether to return a full response ("full") including confidence percentages for each found label, or just a flat list of labels ("list").

- format (String ⋅ default: "json"): In what format to return the descriptions.

  "json" returns a JSON file. "meta" does not return a file, but stores the data inside Transloadit's file object (under ${file.meta.descriptions}) that's passed around between encoding Steps, so that you can use the values to burn the data into videos, filter on them, etc.

- explicit_descriptions (Boolean ⋅ default: false): Whether to return only explicit or only non-explicit descriptions of the provided image. Explicit descriptions include labels for NSFW content (nudity, violence, etc). If set to false, only non-explicit descriptions (such as human or chair) will be returned. If set to true, only explicit descriptions will be returned.

  The possible descriptions depend on the chosen provider. The list of labels from AWS can be found in their documentation. GCP labels the image based on five categories, as described in their documentation.

For an example of how to automatically reject NSFW content and malware, please check out this blog post.
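The format and granularity parameters can be combined with a filtering Step to act on the returned labels, as the introduction describes. The following is a minimal sketch, not a definitive recipe: it assumes the 🤖/file/filter Robot with its declines parameter and includes operator, and the label "Bridge" is a hypothetical placeholder. Check that Robot's documentation for the exact syntax before relying on it.

```json
{
  "steps": {
    "described": {
      "robot": "/image/describe",
      "use": ":original",
      "provider": "aws",
      "format": "meta",
      "granularity": "list"
    },
    "filtered": {
      "robot": "/file/filter",
      "use": "described",
      "declines": [
        ["${file.meta.descriptions}", "includes", "Bridge"]
      ]
    }
  }
}
```

Because the providers' models change over time, a filter like this is better treated as a heuristic than as a guarantee. For NSFW filtering, the same pattern would presumably apply with "explicit_descriptions" set to true and a label taken from the chosen provider's list.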

Demos

- Generate a slideshow from AI-filtered images

- Recognize and reject certain objects in images

- Recognize and reject nudity in images (NSFW content)

Related blog posts

- Tech preview: new AI Robots for enhanced media processing February 17, 2020

- Introducing the OCR Robot for easy text extraction August 26, 2021

- Celebrating Transloadit’s 2021 milestones and progress January 31, 2022

- Building an alt-text to speech generator with Transloadit May 9, 2022

- How to automate content moderation using Transloadit (NSFW) July 25, 2022

- Use Transloadit to automatically filter NSFW images July 17, 2024